Noise-canceling headphones use active noise control to reduce external noise and provide a more immersive listening experience. Despite their popularity, they give users little control over which sounds are blocked and which are allowed through. A team at the University of Washington has developed “semantic hearing,” a deep-learning system that lets wearers of AI-powered headphones select specific sounds in real time.

The system streams audio from the headphones to a connected smartphone, where users choose, by voice command or through an app, which of 20 types of sounds to let through, including sirens, speech, and bird chirps.
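As a rough illustration of how a user's choice might be handed to a sound-filtering model, the sketch below turns a set of selected sound types into a 0/1 mask. This is an assumption for illustration, not the researchers' published interface: the names `KNOWN_CLASSES` and `selection_mask` are hypothetical, and only the three sound types named above are included because the article does not list the rest.

```python
from __future__ import annotations

# Hypothetical labels: only the three sound types the article names.
# The article says there are 20 in total but does not list the others.
KNOWN_CLASSES = ["siren", "speech", "bird_chirp"]


def selection_mask(selected: set[str], classes: list[str] = KNOWN_CLASSES) -> list[int]:
    """Return a 0/1 mask with a 1 for every sound type the user wants to hear."""
    unknown = selected - set(classes)
    if unknown:
        # Reject labels the (assumed) model was never trained on.
        raise ValueError(f"unsupported sound classes: {sorted(unknown)}")
    return [1 if name in selected else 0 for name in classes]


# A wearer who wants to hear sirens and birds, but not speech.
mask = selection_mask({"siren", "bird_chirp"})
print(mask)  # [1, 0, 1]
```

A mask like this keeps the app or voice interface separate from the audio model: the interface only needs to know the label names, and the model only needs the vector.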

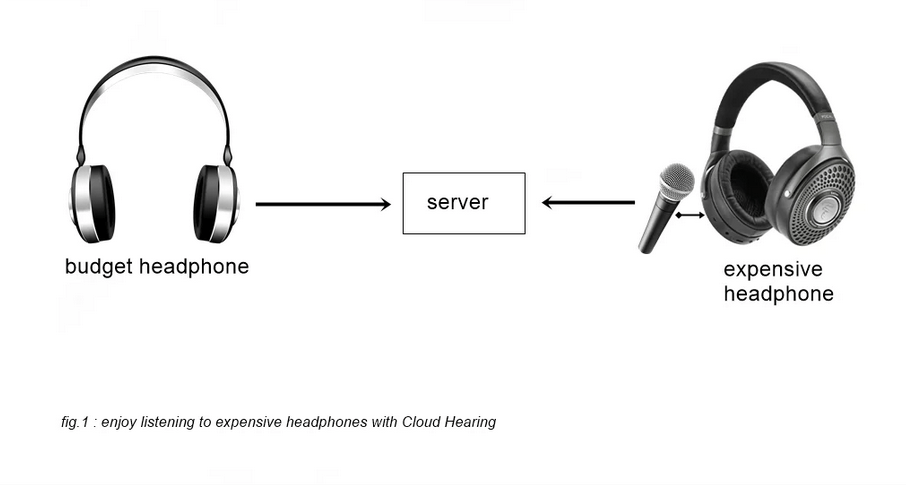

The team, led by Shyam Gollakota, set out to isolate and process specific sounds in real time, something current noise-canceling technology struggles to do. To keep what wearers hear in sync with what they see, the system prioritizes fast processing and runs on the connected smartphone rather than on slower cloud servers.

“Understanding what a bird sounds like and extracting it from all other sounds in an environment requires real-time intelligence that today’s noise canceling headphones haven’t achieved,” said senior author Shyam Gollakota, a UW professor in the Paul G. Allen School of Computer Science & Engineering.

“The challenge is that the sounds headphone wearers hear need to sync with their visual senses. You can’t be hearing someone’s voice two seconds after they talk to you. This means the neural algorithms must process sounds in under a hundredth of a second.”
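To put the "hundredth of a second" figure in concrete terms, the sketch below works out how many audio samples fit in a 10 ms budget and whether a given chunk size leaves any time for processing. The sample rate, chunk sizes, and processing time are illustrative assumptions, not figures from the researchers, and the function names are hypothetical.

```python
LATENCY_BUDGET_MS = 10  # "under a hundredth of a second", per the quote above


def samples_in_budget(sample_rate_hz: int, budget_ms: int = LATENCY_BUDGET_MS) -> int:
    """Number of whole audio samples that arrive within the latency budget."""
    return sample_rate_hz * budget_ms // 1000


def fits_budget(chunk_samples: int, sample_rate_hz: int, processing_ms: float,
                budget_ms: int = LATENCY_BUDGET_MS) -> bool:
    """True if buffering one chunk plus processing it stays under the budget."""
    buffering_ms = 1000 * chunk_samples / sample_rate_hz
    return buffering_ms + processing_ms < budget_ms


# Assumed 44.1 kHz audio: 10 ms is only 441 samples.
print(samples_in_budget(44_100))       # 441

# A 256-sample chunk takes about 5.8 ms to fill, leaving room for 3 ms of processing.
print(fits_budget(256, 44_100, 3.0))   # True

# A 512-sample chunk takes about 11.6 ms to fill, so it misses the budget outright.
print(fits_budget(512, 44_100, 0.0))   # False
```

The arithmetic shows why the budget is tight: the audio has to be handled in very small pieces, and whatever time is spent waiting for a piece to fill is time the neural network cannot use.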

The system was tested in a variety of settings, where it isolated target sounds while removing background noise and drew positive feedback from participants. It had trouble, however, telling apart similar sounds, such as vocal music and human speech. The researchers are working to improve its performance by training it on more diverse data.

Semantic hearing represents a significant step toward personalized noise control: the AI-powered headphones let users actively choose the sounds they want to hear, improving their overall listening experience.

Despite its difficulty with similar sounds, the system’s success in isolating specific sounds while reducing background noise is a promising advance for noise-canceling technology. Continued refinement and training could make sound customization more accurate and effective in the future.