Microsoft’s AI research team accidentally exposed 38 terabytes of the company’s private data. The exposure resulted from an oversight while publishing training data for image-recognition AI models.

The exposed data comprised complete backups of two employees’ computers, containing highly sensitive personal information such as passwords to Microsoft services, secret keys, and over 30,000 internal Microsoft Teams messages involving more than 350 Microsoft employees.

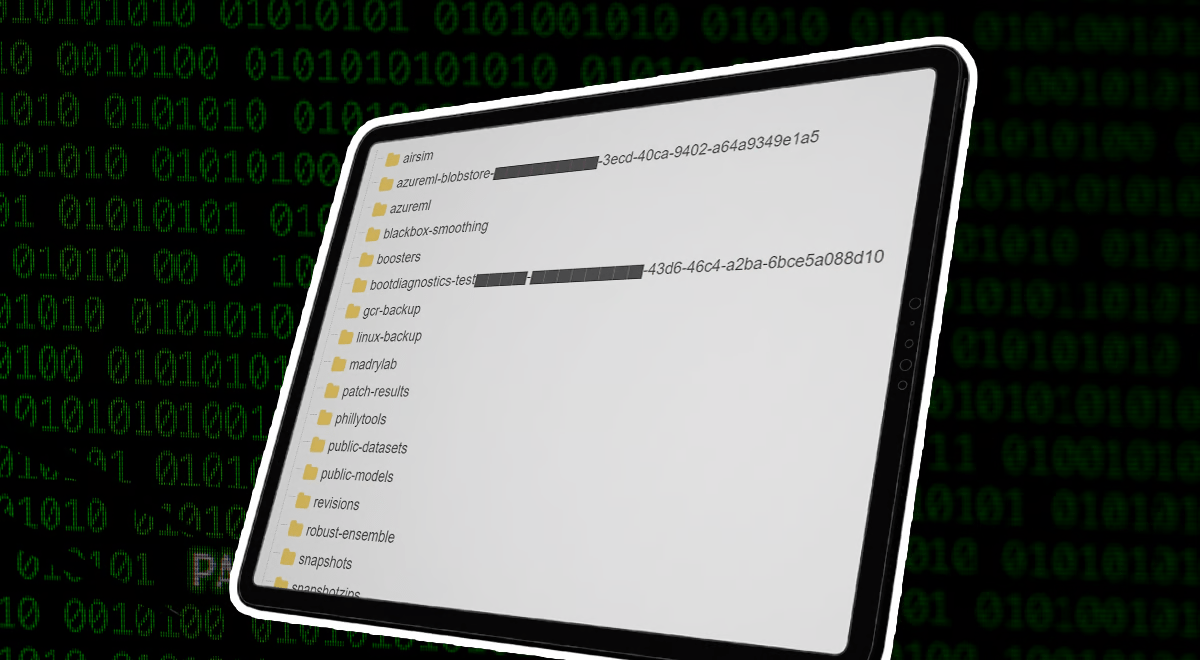

The error occurred when the AI team published a bucket of training data, including open-source code and AI models, through a GitHub repository. The repository pointed users to an Azure Storage URL, on Microsoft’s cloud platform, from which they could download the models. Unfortunately, this link granted unrestricted access to the entire Azure storage account, allowing visitors to view, upload, overwrite, or delete files.

The exposure was made possible by an Azure feature known as a Shared Access Signature (SAS) token: a signed URL that grants access to Azure Storage data. A SAS token can be scoped to specific files or containers, limited to read-only permissions, and given an expiry time. In this case, however, the link published by Microsoft’s AI team was misconfigured and granted full access to the whole storage account.
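
To make the scoping problem concrete, here is a minimal sketch of a check that inspects the query string of a SAS URL and flags overly broad settings. It relies on the standard SAS query parameters (`sp` for permissions, `se` for expiry, `ss`/`srt` for account-level tokens, `sig` for the signature). The function name `audit_sas_url`, the seven-day threshold, the list of risky permission letters, and the example URL are assumptions chosen for illustration, not Microsoft's or Wiz's tooling.

```python
from datetime import datetime, timezone
from urllib.parse import urlparse, parse_qs

# Permission letters that allow changing data (write, delete, add, create,
# update). This list is illustrative and not exhaustive.
MUTATING_PERMISSIONS = set("wdacu")


def audit_sas_url(url, now=None, max_lifetime_days=7):
    """Return a list of warnings for a SAS URL (hypothetical helper)."""
    now = now or datetime.now(timezone.utc)
    params = {k: v[0] for k, v in parse_qs(urlparse(url).query).items()}

    if "sig" not in params:
        return ["no 'sig' parameter: this does not look like a SAS URL"]

    warnings = []

    # Account SAS tokens carry 'ss' (services) and 'srt' (resource types),
    # so they can reach far beyond a single file or container.
    if "ss" in params or "srt" in params:
        warnings.append("account-level SAS: not limited to one file or container")

    risky = sorted(set(params.get("sp", "")) & MUTATING_PERMISSIONS)
    if risky:
        warnings.append("grants mutating permissions: " + "".join(risky))

    expiry_raw = params.get("se")
    if expiry_raw is None:
        warnings.append("no expiry ('se') found in the URL")
    else:
        # 'se' is an ISO 8601 UTC timestamp; normalise a trailing 'Z'.
        expiry = datetime.fromisoformat(expiry_raw.replace("Z", "+00:00"))
        if expiry.tzinfo is None:
            expiry = expiry.replace(tzinfo=timezone.utc)
        if (expiry - now).days > max_lifetime_days:
            warnings.append("expires more than %d days from now" % max_lifetime_days)

    return warnings


if __name__ == "__main__":
    # Hypothetical URL: account name, path, and signature are placeholders.
    example = (
        "https://exampleaccount.blob.core.windows.net/models"
        "?sv=2019-12-12&ss=b&srt=sco&sp=rwdlac"
        "&se=2051-10-06T00:00:00Z&sig=REDACTED"
    )
    for warning in audit_sas_url(example):
        print("WARNING:", warning)
```

A check like this only reads what the URL itself declares; it cannot tell whether a token has already been revoked, so it complements rather than replaces proper access reviews on the storage account.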

Even more concerning is the timeline: the data had been accessible since 2020, leaving critical information potentially exposed for an extended period.

On June 22, 2023, cloud security firm Wiz reported the exposure to Microsoft. Two days later, Microsoft invalidated the SAS token, closing off access. By August, Microsoft had completed an investigation into the scope and potential impact of the leak. The company stated that no customer data was exposed and that no other internal services were put at risk. The incident nonetheless underscores how important rigorous data security procedures are, particularly when handling large volumes of sensitive information in the cloud.