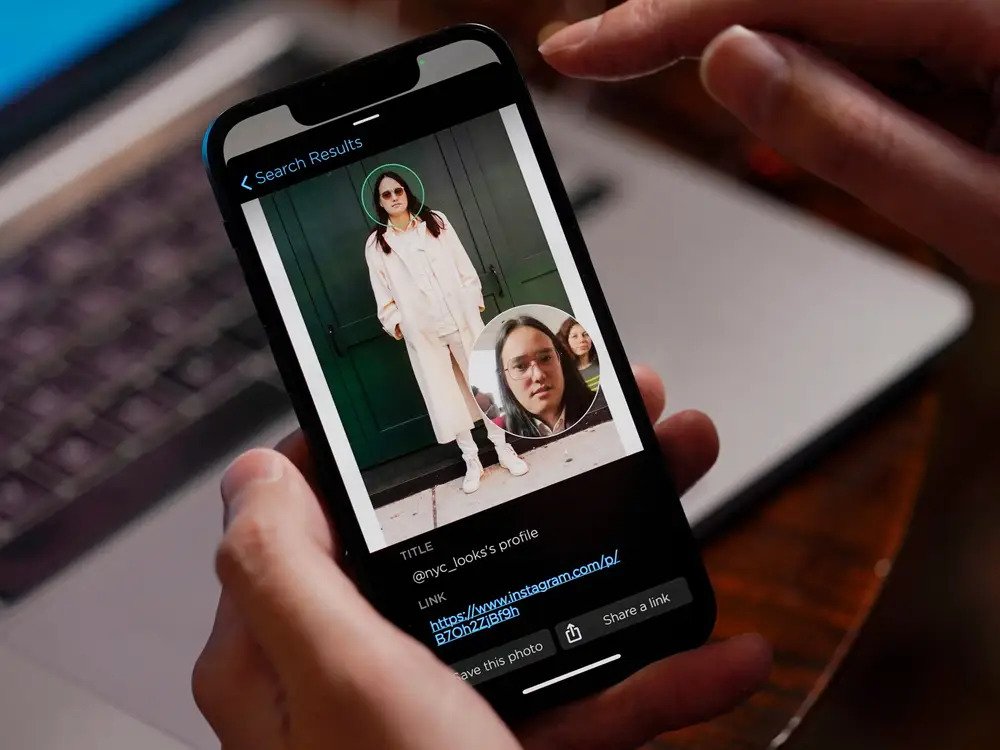

Clearview AI, a company that provides facial recognition technology to police departments in the U.S., has been at the center of controversy for using a database of 30 billion photos scraped from Facebook and other social media platforms without the users’ consent.

Critics say this has created a “perpetual police line-up” that includes even people who have done nothing wrong. While Clearview AI claims its technology can help identify rioters, save exploited children, and exonerate the wrongfully accused, critics are concerned about privacy violations and about wrongful arrests caused by faulty facial recognition matches, citing cases in Detroit and New Orleans.

In a recent interview with the BBC, Clearview AI’s CEO Hoan Ton-That admitted that the company took photos without users’ knowledge to rapidly expand its database, which it markets as a tool for law enforcement agencies to “bring justice to victims.”

In the same interview, Ton-That confirmed that US law enforcement agencies have accessed Clearview AI’s facial recognition database almost a million times since the company’s inception in 2017. However, the nature of the relationship between law enforcement and Clearview AI remains unclear, and Insider could not independently verify the reported number of accesses.

In an email statement to Insider, Ton-That defended the company’s practices, stating that its database was lawfully collected and works like any other search engine, such as Google. He further explained that the database is used exclusively by law enforcement agencies for post-crime investigations and is not available to the general public.

According to Ton-That, every photo in the database is potentially crucial evidence that could save a life, deliver justice to an innocent victim, prevent a wrongful identification, or exonerate an innocent person.

Clearview AI creates biometric face prints of individuals and cross-references them with social media profiles and other identifying information in its database, even if the photos were obtained without consent.

This linking is done automatically, making it difficult for individuals to remove their photos from the database. Although the company has internal policies, they are insufficient to prevent individuals’ information from being linked permanently.

Following a lawsuit brought by the ACLU under a statewide privacy law, residents of Illinois can opt out of Clearview AI’s technology by providing another photo, which the company claims will be used only to identify which stored photos to remove.

As a result of the same lawsuit, Clearview AI has been banned nationwide from selling its technology to private businesses. However, residents of other states do not have the opt-out option available in Illinois, and the company is still allowed to partner with law enforcement agencies.