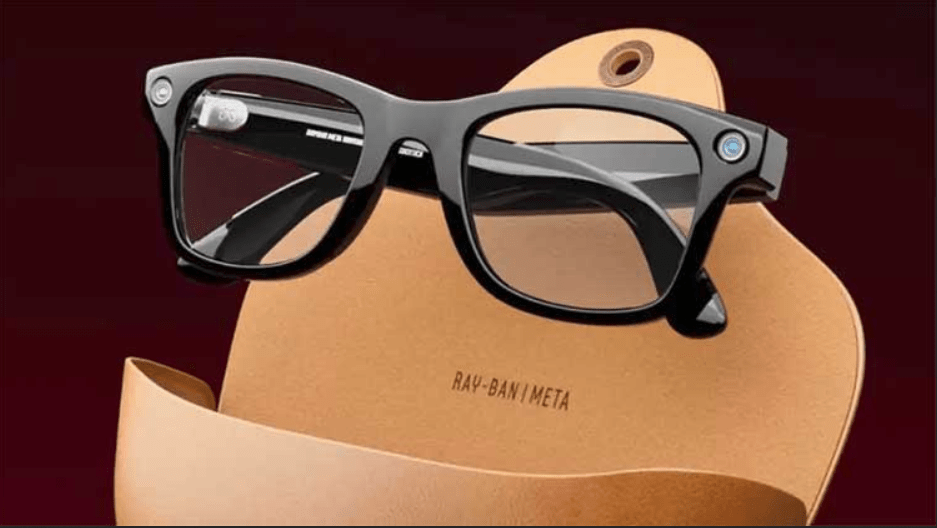

Meta, formerly known as Facebook, is adding a “multimodal” AI assistant to its Ray-Ban Meta smart glasses. The assistant uses image and audio recognition to answer user queries based on what the glasses’ built-in camera and microphones capture.

Mark Zuckerberg, Meta’s CEO, showcased the new features in an Instagram reel. The AI assistant demonstrated its versatility by suggesting outfit combinations, translating text, generating image captions, and describing objects within the glasses’ field of view. For instance, Zuckerberg asked the glasses to recommend pants to match a shirt he was holding, and the assistant provided options while describing the color and pattern of the shirt.

Meta’s CTO, Andrew Bosworth, demonstrated additional capabilities, including common tasks such as translation and summarization. In his demo, the glasses correctly identified a piece of California-shaped wall art and provided information about the state.

The glasses are not limited to fashion advice. Users can ask them to translate a foreign-language menu, provide information about museum exhibits, or explain the meaning of an artwork. In effect, the glasses act as a personal assistant that answers questions about whatever is in the wearer’s field of view.

The early access test for the multimodal AI assistant is currently limited to a select group of tech-savvy users in the United States. Despite the limited rollout, excitement about the feature is spreading quickly, with many envisioning a future in which smart glasses serve as concierges, style advisors, language interpreters, and on-the-go information hubs.

However, the AI assistant is still a work in progress with some limitations. It relies on taking a photo of the user’s surroundings, which is then analyzed in the cloud. There is a slight delay between making a voice request and receiving a response, and users must use specific voice commands to trigger both photo-taking and queries. The captured photos and responses are stored in the Meta View app on the user’s phone, offering a convenient way to review and revisit information gathered through the glasses.
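For readers who want a concrete picture, the flow described above (voice trigger, photo capture, cloud analysis, saved history) can be sketched roughly as follows. This is an illustrative sketch only, not Meta’s implementation: the wake phrase, the `AssistantSession` class, and the `capture_photo` and `analyze_in_cloud` callables are all hypothetical names invented for this example, and the cloud call is replaced by a local stub.

```python
from dataclasses import dataclass, field
from typing import Callable, List, Optional

# Hypothetical wake phrase, chosen for illustration only.
WAKE_PHRASE = "hey glasses"


@dataclass
class Interaction:
    """One saved request: the query, the photo taken, and the answer."""
    query: str
    photo: bytes
    response: str


@dataclass
class AssistantSession:
    # Both callables are stand-ins (assumptions): one for the camera,
    # one for a remote image-analysis service.
    capture_photo: Callable[[], bytes]
    analyze_in_cloud: Callable[[bytes, str], str]
    history: List[Interaction] = field(default_factory=list)

    def handle_utterance(self, utterance: str) -> Optional[str]:
        """Run the full flow only when the utterance starts with the wake phrase."""
        text = utterance.strip()
        if not text.lower().startswith(WAKE_PHRASE):
            return None  # no specific voice command, so nothing happens
        query = text[len(WAKE_PHRASE):].lstrip(" ,")
        if not query:
            return None
        photo = self.capture_photo()                    # 1. take a photo
        response = self.analyze_in_cloud(photo, query)  # 2. analyze it remotely
        # 3. keep the photo and answer so they can be reviewed later
        self.history.append(Interaction(query, photo, response))
        return response


# Usage with stubbed-out camera and cloud service.
session = AssistantSession(
    capture_photo=lambda: b"fake-jpeg-bytes",
    analyze_in_cloud=lambda photo, query: f"(stub answer to: {query})",
)
print(session.handle_utterance("Hey glasses, what pants match this shirt?"))
print(len(session.history), "interaction(s) saved")
```

The round trip to the remote service in step 2 is where the delay mentioned above would arise, and the `history` list plays the role of the saved photos and responses that users can review in the companion app.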

In summary, Meta’s multimodal AI assistant lets wearers of the Ray-Ban smart glasses ask questions about what they see and hear. While the feature is still in testing, its potential applications range from fashion advice to language translation, making the glasses a promising tool for exploration, learning, and entertainment.